The risk of vibes-based software engineering is building a house of cards at 10x speed

Unless you can test the code rigorously at the same speed, you are not truly improving productivity for high-value code

One important premise before I go too deep into today’s post: I am a paying user of Cursor and a big fan of the tool, so nothing I’m about to say is aimed at Cursor specifically. It is intended as a more general reflection.

We are entering the era of “vibes-based software engineering”, and the more I look into it the more I find it terrifying.

In a recent social media campaign, Cursor went all-in pushing for a simple idea: if the AI can build the feature in 30 seconds and the demo works, why spend 30 minutes reviewing the diff?

The problem they are trying to address is quite visible to software developers: it’s far more time-consuming to review code than it is to write it.

If the AI produced 1,000 lines of code in the blink of an eye, the human reviewer is faced with a choice:

Trust the demo, ship the code, and hope there isn’t a memory leak or a security hole;

Spend more time meticulously auditing the AI’s output than it would have taken to just write it manually.

Research from early 2025 (LogRocket/CodeRabbit) shows that senior engineers now spend an average of 4.3 minutes reviewing an AI-generated suggestion, compared to just 1.2 minutes for human-written code. Because AI doesn’t “understand” your specific architecture, it produces defensive but bloated patterns that are exhausting to audit.

Code generation is 10x faster, but code verification is 3x harder.
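
As a rough sanity check, and assuming the “3x” is shorthand for the review times quoted above (the two constants below are those figures; the function name is just illustrative), the ratio can be worked out in a couple of lines:

```python
# Review times per suggestion, in minutes, as quoted in the post.
AI_REVIEW_MINUTES = 4.3
HUMAN_REVIEW_MINUTES = 1.2


def review_slowdown(ai_minutes: float, human_minutes: float) -> float:
    """How many times longer an AI-generated suggestion takes to review."""
    return ai_minutes / human_minutes


slowdown = review_slowdown(AI_REVIEW_MINUTES, HUMAN_REVIEW_MINUTES)
print(f"Review takes about {slowdown:.1f}x longer")
```

On those numbers the slowdown is closer to 3.6x than 3x, so “3x harder” is, if anything, a conservative rounding.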

According to the Sonar 2026 State of Code Report:

61% of developers agree that AI often produces code that “looks correct but is not reliable”;

96% of developers admit they do not fully trust that AI-generated code is functionally correct.

Yet, the volume of code is exploding! GitHub’s Octoverse 2025 reported a 29% surge in merged PRs, while human review capacity remained flat. We are rubber-stamping the collapse of our own systems.

A landmark randomized controlled trial (RCT) by METR (July 2025) found that experienced developers took 19% longer to complete tasks when using AI tools. Those same developers believed they were 20% faster.

What is going on here?!

Are we so enamored with the “magic” of the demo that we don’t even notice the hours we spend fixing the subtle hallucinations hidden in the diff?

Now, imagine a bank reviewing a new transaction ledger based on a “demo.”

“Look, the numbers moved from Box A to Box B! It works!” Meanwhile, the underlying logic doesn’t meet compliance and includes zero error handling.

It’s the AI-driven version of Schrödinger’s cat experiment: the code is both working and broken until someone hits it in production.

A 2025 study found that AI-generated code carries a vulnerability rate of ~50%, compared to 15–20% in traditional human-written code. For critical applications, treating a “demo” as the acceptance test is a no-go in my opinion.

A point I bring up often is that we need better ways to verify, not just to write.

Everyone is focused on the speed of writing software, but unless you can also test it rigorously at the same speed, you are not truly improving productivity for high-value code.
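
To make the bank-ledger scenario concrete, here is a minimal sketch of the difference between a demo and a check that runs just as fast but actually probes the logic. Everything in it is hypothetical and for illustration only: the two-account ledger, the function names `transfer`, `safe_transfer`, `demo_check` and `invariant_check`, and the choice of invariants (money is conserved, no balance goes negative). It is not how any real banking system or AI tool works.

```python
import random


def transfer(balances, src, dst, amount):
    """Naive transfer of the kind that passes a demo: no validation at all."""
    balances[src] -= amount
    balances[dst] += amount


def safe_transfer(balances, src, dst, amount):
    """Same transfer, with the error handling a demo never exercises."""
    if amount <= 0:
        raise ValueError("amount must be positive")
    if src not in balances or dst not in balances:
        raise KeyError("unknown account")
    if balances[src] < amount:
        raise ValueError("insufficient funds")
    balances[src] -= amount
    balances[dst] += amount


def demo_check(fn):
    """The 'demo': a single happy-path transfer from Box A to Box B."""
    balances = {"A": 100, "B": 0}
    fn(balances, "A", "B", 30)
    return balances == {"A": 70, "B": 30}


def invariant_check(fn, trials=1000, seed=0):
    """Randomized check: total money is conserved and no balance is negative."""
    rng = random.Random(seed)
    for _ in range(trials):
        balances = {"A": 100, "B": 0}
        amount = rng.randint(-50, 200)  # includes negative and oversized amounts
        try:
            fn(balances, "A", "B", amount)
        except (ValueError, KeyError):
            pass  # a rejected transfer is fine, as long as the ledger is intact
        if sum(balances.values()) != 100 or min(balances.values()) < 0:
            return False
    return True


if __name__ == "__main__":
    for fn in (transfer, safe_transfer):
        print(fn.__name__, "demo:", demo_check(fn), "invariants:", invariant_check(fn))
```

Both functions pass the demo. Only `safe_transfer` survives the invariant check, because the naive version happily accepts negative amounts and overdrafts. The point is not this particular test but that it runs in milliseconds, which is the speed verification needs to reach to keep up with generation.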

I wholeheartedly believe this entire idea of being content with the demo rather than with the diff is an absolute disgrace.

Is “working” enough now? Aren’t we just building a house of cards at 10x speed?

The industry seems stuck in a perception gap: we feel 20% faster, but we are actually 19% slower.

As usual, if you enjoy reading Consulting Intel, please do me a favor: spread the word and share this post.

👋

👀 Links of interest

A few corners of the internet you may find interesting:

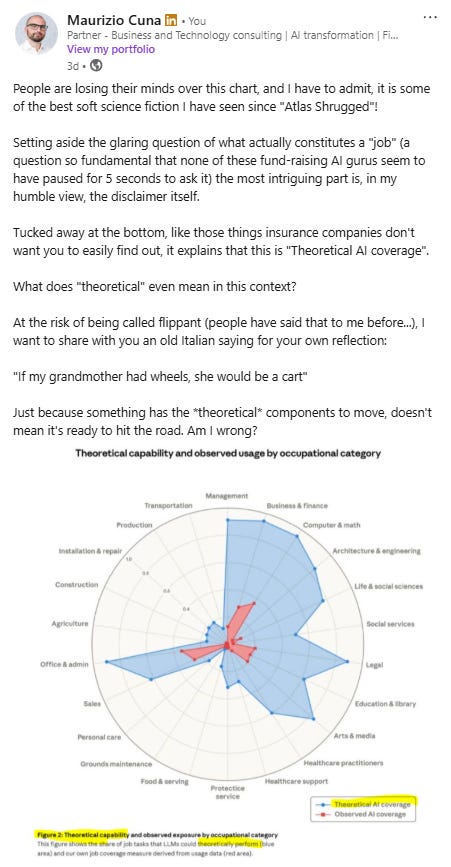

A recent post I published on LinkedIn was quite well received, so I thought I should share it here as well. As usual, feel free to connect with me on LinkedIn (always great to see you there too!):

I had the opportunity to try the AI-slide builder Perceptis ahead of their public launch, and I was so impressed! Unlike other apps I have tested before, this one actually builds good slides with a structure, starting from a simple prompt. Try it for 10 minutes and let me know what you think.

My first book Beyond Slides became a #1 Amazon Best Seller in the USA, UK, Australia and Italy. This is a message I received the other day from a new reader:

Have you looked into the Leaders Toolkit? It is a deck of 52 tools, frameworks and mental models to make you a better leader (use code CONSULTANT10 for 10% off);

The Consulting Intel private Discord group with 250+ global members is where consultants meet to discuss and support each other (it’s free).